Asymptotic Growth of Functions

How algorithms behave as input size approaches infinity — and why it matters for scalability

🔍 What Does "Asymptotic" Mean?

When analyzing algorithms, we don't just care about how they perform for small inputs — we care about how they behave as the input size becomes very large. That's where asymptotic growth comes in.

Asymptotic analysis studies how functions grow as input size n → ∞. In algorithm design, this tells us how runtime or memory usage scales as the problem size increases.

The word asymptotic refers to behavior "in the limit" — meaning:

Instead of focusing on exact runtime values like:

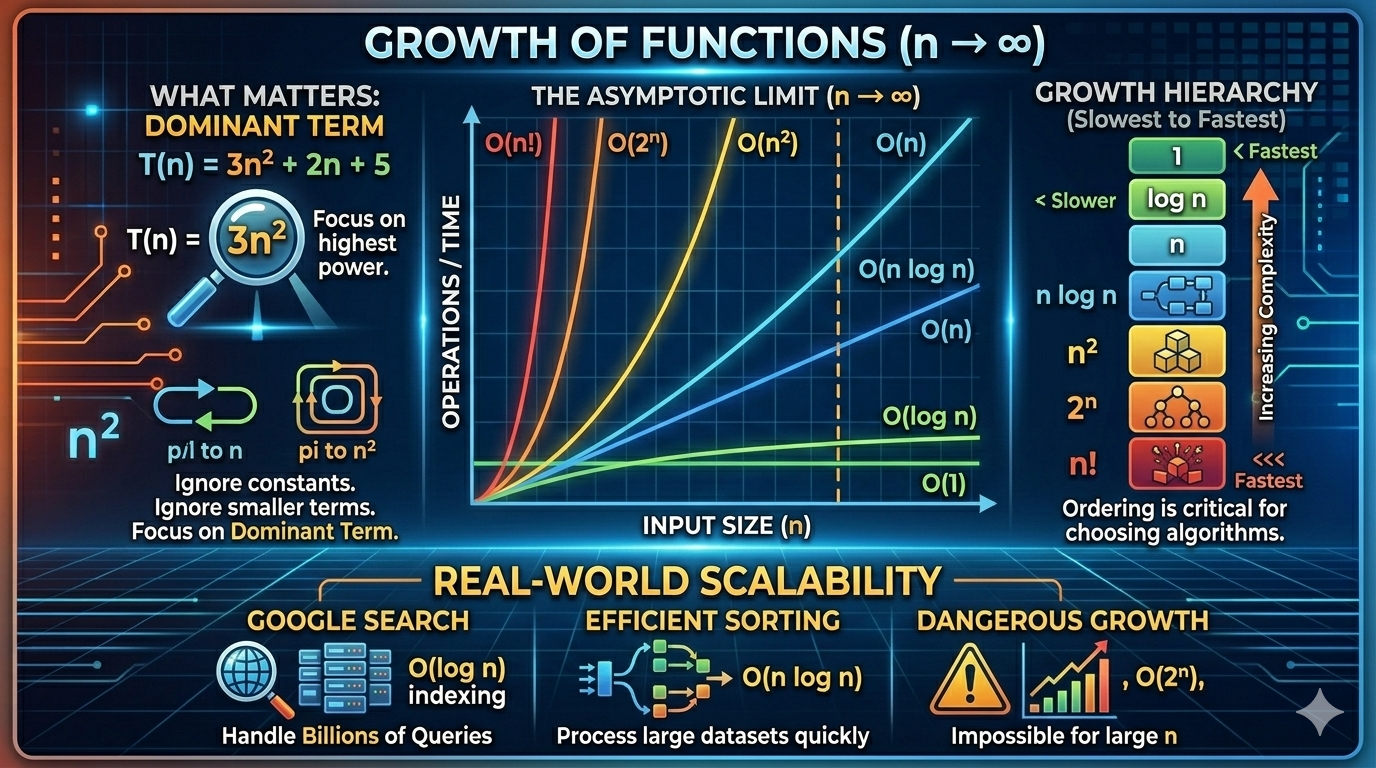

T(n) = 3n² + 2n + 5We focus on the dominant term as n becomes very large. For example:

Because the quadratic term grows much faster than the linear or constant terms.

📈 Why Asymptotic Growth Matters

In real world systems:

- Inputs can grow to millions or billions

- Small inefficiencies multiply quickly

- Scalability depends on growth rate, not small constants

Consider how different growth rates compare:

| n | n | n² | 2ⁿ |

|---|---|---|---|

| 10 | 10 | 100 | 1,024 |

| 100 | 100 | 10,000 | ~10³⁰ |

| 1,000 | 1,000 | 1,000,000 | astronomically large |

Exponential growth quickly becomes impossible to compute. Even for moderate inputs, 2ⁿ produces numbers larger than the number of atoms in the observable universe.

📊 Common Orders of Growth

Here are the most important growth rates in computer science:

🔹 Constant Growth — O(1)

return arr[0]No matter how large n becomes, time does not change.

🔹 Logarithmic Growth — O(log n)

Appears in divide-and-conquer algorithms like binary search. Every step reduces the problem size by half.

while low <= high:

mid = (low + high) // 2🔹 Linear Growth — O(n)

for i in range(n):

print(i)Time increases proportionally with input size.

🔹 Linearithmic Growth — O(n log n)

Common in efficient sorting algorithms:

- Merge Sort

- Heap Sort

🔹 Quadratic Growth — O(n²)

for i in range(n):

for j in range(n):

print(i, j)Used in:

- Bubble Sort

- Selection Sort

🔹 Exponential Growth — O(2ⁿ)

Recursive Fibonacci (without memoization). Extremely inefficient for large inputs.

🔹 Factorial Growth — O(n!)

Appears in:

- Permutation generation

- Traveling Salesman brute force

Factorial growth becomes impossible even for moderate n. At n = 20, n! = 2,432,902,008,176,640,000.

📐 Mathematical Definition of Asymptotic Growth

We compare growth using limits. Let f(n) and g(n) be two functions. We say:

If:

Comparing Growth Using Limits

To determine which function grows faster:

Cases:

- If limit = 0 → f grows slower

- If limit = constant → same growth rate

- If limit = ∞ → f grows faster

Example:

So:

Meaning n grows strictly slower than n².

🏔️ Growth Hierarchy (From Slowest to Fastest)

This ordering is critical when choosing algorithms. Always prefer algorithms with lower growth rates — the difference becomes dramatic at scale.

🧪 Solving Asymptotic Growth Problems

Let's practice.

Example 1: Find Dominant Term

Step 1: Identify highest power → n³

Step 2: Ignore constants

Answer:

Example 2: Compare Two Functions

Which grows faster?

- f(n) = n log n

- g(n) = n1.5

Take limit:

So:

Thus, n1.5 grows faster.

Example 3: Loop Analysis

for i in range(n):

for j in range(i):

print(i, j)Number of executions:

Which simplifies to:

🔑 Key Insights About Asymptotic Growth

- Constants do not matter — we drop them in asymptotic analysis

- Lower order terms do not matter — only the dominant term counts

- Only the dominant term matters — it determines behavior at scale

- Growth rate determines scalability — not raw performance on small inputs

- Exponential algorithms are dangerous for large inputs

🌐 Real-World Impact

- Google Search must handle billions of queries → O(n²) algorithms are impossible

- Databases use O(log n) indexing (B-trees)

- Sorting large datasets requires O(n log n) algorithms

Efficient asymptotic growth is the difference between:

| Scenario | Time |

|---|---|

| Optimal algorithm | 1 second |

| Suboptimal algorithm | 1 hour |

| Terrible algorithm | 100 years |

📝 Final Summary

Asymptotic growth tells us how algorithms behave as input size becomes very large. It helps us:

- Compare algorithms objectively

- Predict scalability before deployment

- Choose efficient solutions for large-scale systems

- Avoid catastrophic performance issues in production

Understanding asymptotic growth is one of the most powerful tools in algorithm design. It transforms guesswork into engineering.