Solving Recurrence Relations

Mastering the techniques behind analyzing divide-and-conquer algorithms — from substitution to the Master Theorem

Many of the most powerful algorithms in computer science — especially divide-and-conquer algorithms — are naturally described using recurrence relations.

If you understand how to solve recurrences, you can determine the time complexity of algorithms like:

- Merge Sort

- Quick Sort

- Binary Search

- Strassen's Matrix Multiplication

- Many Dynamic Programming algorithms

This article explains what recurrence relations are and how to solve them using different techniques.

🔍 What Is a Recurrence Relation?

A recurrence relation is an equation that defines a function in terms of its own values on smaller inputs. In algorithm analysis, we use recurrences to describe running time.

Example:

$$T(n) = T(n-1) + c$$

This means:

- Solving a problem of size n

- Requires solving a smaller problem of size n − 1

- Plus a constant amount of extra work, c

Example: Recursive Sum

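```python
def sum_array(arr, n):
    # Base case: an empty prefix sums to 0.
    if n == 0:
        return 0
    # Recursive case: sum the first n-1 elements, then add the n-th.
    return sum_array(arr, n - 1) + arr[n - 1]
```

Running time recurrence:

$$T(n) = T(n-1) + O(1), \quad T(0) = O(1)$$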
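One way to see the recurrence in action is to count calls instead of summing values. The sketch below is illustrative only: `count_calls` is a hypothetical helper, not part of the original example, and it assumes each call does a constant amount of work besides recursing.

```python
def count_calls(n):
    """Count the calls sum_array makes on an input of length n.

    Mirrors the recursion: one call for size n plus the calls for size n - 1.
    """
    if n == 0:
        return 1  # the base-case call itself
    return 1 + count_calls(n - 1)
```

The count grows by exactly one each time n grows by one, which is the linear behavior the recurrence predicts.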

📈 Why Recurrence Relations Matter

Most recursive algorithms do one of these:

- Reduce problem size by 1

- Divide problem into equal parts

- Divide problem into unequal parts

Each leads to a different recurrence. Understanding recurrences helps us:

- Predict algorithm efficiency

- Compare divide-and-conquer strategies

- Optimize recursive designs
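As a rough illustration, the three kinds of recursive algorithm listed above typically give recurrences of the following shapes. The symbols a, b and f(n), and the 1/3 to 2/3 split in the last line, are assumptions chosen for illustration.

```latex
\begin{align*}
\text{Reduce by 1:}   \quad & T(n) = T(n-1) + f(n) \\
\text{Equal parts:}   \quad & T(n) = a\,T(n/b) + f(n) \\
\text{Unequal parts:} \quad & T(n) = T(n/3) + T(2n/3) + f(n)
\end{align*}
```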

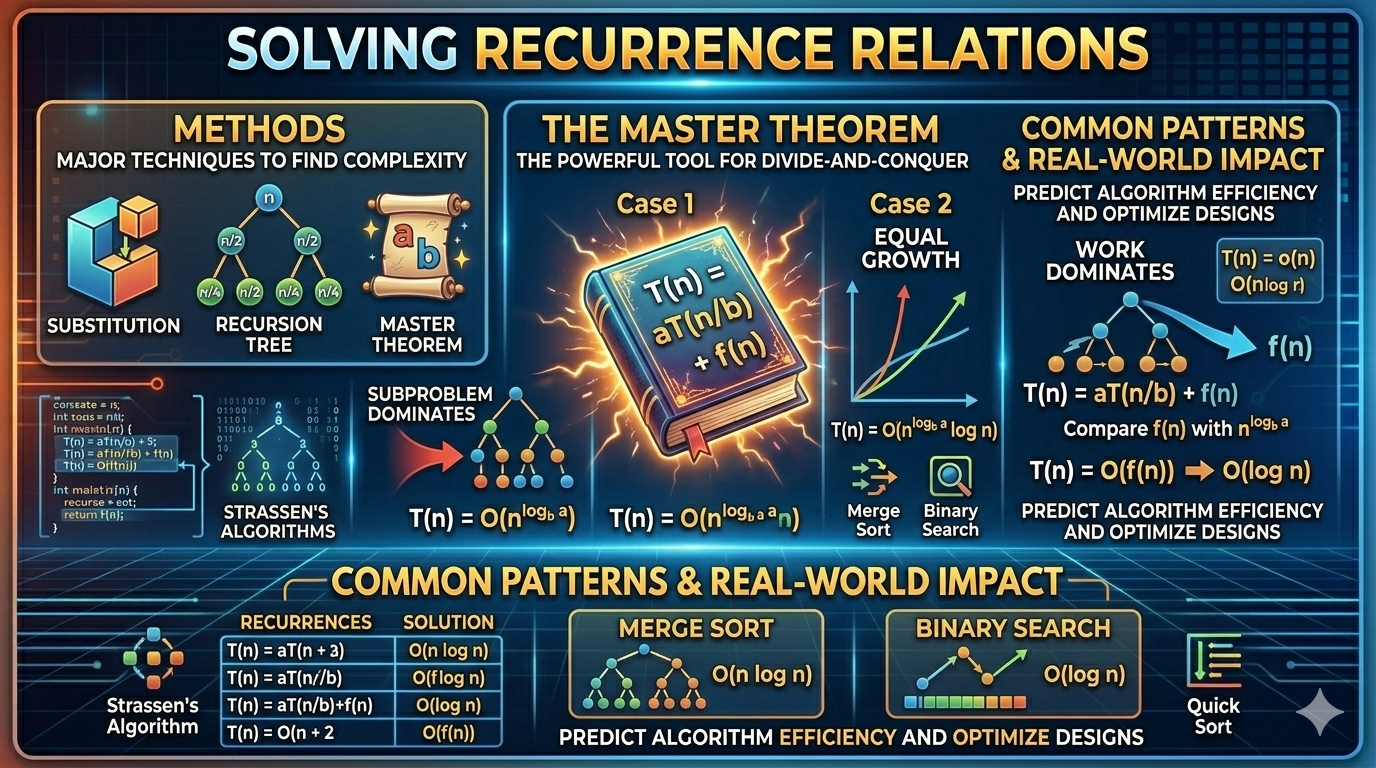

🛠️ Methods to Solve Recurrence Relations

There are three major techniques, plus the iteration method covered after them:

- Substitution Method

- Recursion Tree Method

- Master Theorem

Let's go through them one by one.

📝 Method 1: Substitution Method

This method involves:

- Guessing the solution

- Proving it using induction

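Example 1

$$T(n) = T(n-1) + 1$$

Expand:

$$T(n) = T(n-2) + 2 = T(n-3) + 3$$

Continuing:

$$T(n) = T(n-k) + k$$

So, taking k = n:

$$T(n) = T(0) + n = O(n)$$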

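Example 2

$$T(n) = 2T(n/2) + n$$

Let's guess:

$$T(n) = O(n \log n)$$

We can verify the guess by substituting it into the recurrence and checking it by induction.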
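To make the verification step concrete, here is a sketch of the inductive step for the Merge Sort recurrence T(n) = 2T(n/2) + n with the guess T(n) ≤ c·n·log₂ n. The choice of this recurrence and the constant c are assumptions made for illustration; the base case still has to be checked separately for a small n such as n = 2.

```latex
\begin{align*}
T(n) &= 2\,T(n/2) + n \\
     &\le 2\left(c\,\frac{n}{2}\,\log_2\frac{n}{2}\right) + n
        && \text{(inductive hypothesis)} \\
     &= c\,n\log_2 n - c\,n + n \\
     &\le c\,n\log_2 n
        && \text{whenever } c \ge 1 .
\end{align*}
```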

🌳 Method 2: Recursion Tree Method

This method visualizes recursion as a tree.

Example: Merge Sort

Recurrence:

$$T(n) = 2T(n/2) + n$$

Step 1: Expand levels.

| Level | Subproblems × Size | Cost per Level |
|---|---|---|
| 0 | 1 × n | n |
| 1 | 2 × n/2 | n |
| 2 | 4 × n/4 | n |
| ... | ... | n |

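Each level costs n.

Height of tree (the subproblem size reaches 1 after this many halvings):

$$\log_2 n$$

Total cost:

$$n \cdot (\log_2 n + 1)$$

Final Answer:

$$T(n) = O(n \log n)$$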
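The level-by-level accounting for T(n) = 2T(n/2) + n can be sketched in a few lines of Python. This is a minimal illustration, assuming n is a power of two and that a subproblem of size 1 still costs 1; `recursion_tree_levels` is a hypothetical helper name.

```python
def recursion_tree_levels(n):
    """Return (level, subproblems, size, level_cost) rows for T(n) = 2T(n/2) + n.

    Assumes n is a power of two so that every split is exact.
    """
    rows = []
    level, count, size = 0, 1, n
    while size >= 1:
        # Each of the `count` subproblems does `size` units of combine work.
        rows.append((level, count, size, count * size))
        level, count, size = level + 1, count * 2, size // 2
    return rows


def total_cost(n):
    """Sum the cost of every level of the tree."""
    return sum(cost for _, _, _, cost in recursion_tree_levels(n))
```

For n = 8 the tree has four levels (sizes 8, 4, 2, 1), each costing 8, so the total is 8 × (log₂ 8 + 1) = 32.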

⚡ Method 3: Master Theorem

The most powerful tool for divide-and-conquer recurrences. It applies to recurrences of the form:

$$T(n) = a\,T(n/b) + f(n), \quad a \ge 1,\ b > 1$$

Where:

- a = number of subproblems

- b = division factor

- f(n) = work done outside the recursive calls (dividing the problem and combining the results)

Compare f(n) with $n^{\log_b a}$:

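Case 1: f(n) is polynomially smaller

If:

$$f(n) = O\left(n^{\log_b a - \varepsilon}\right) \text{ for some constant } \varepsilon > 0$$

Then:

$$T(n) = \Theta\left(n^{\log_b a}\right)$$

Case 2: f(n) grows at the same rate

If:

$$f(n) = \Theta\left(n^{\log_b a}\right)$$

Then:

$$T(n) = \Theta\left(n^{\log_b a} \log n\right)$$

Case 3: f(n) is polynomially larger

If:

$$f(n) = \Omega\left(n^{\log_b a + \varepsilon}\right) \text{ for some constant } \varepsilon > 0$$

Then:

$$T(n) = \Theta(f(n))$$

(With the regularity condition: $a\,f(n/b) \le c\,f(n)$ for some constant $c < 1$ and all sufficiently large n.)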
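The three cases can be turned into a small classifier. The sketch below is a simplification, not a full implementation of the theorem: it assumes f(n) = Θ(n^d) for a constant d ≥ 0, in which case the Case 3 regularity condition holds automatically. The function name `master_theorem` and its return format are hypothetical.

```python
import math


def master_theorem(a, b, d):
    """Classify T(n) = a*T(n/b) + Theta(n**d) and return (case, bound).

    Only polynomial driving functions f(n) = n**d are handled.
    """
    if a < 1 or b <= 1 or d < 0:
        raise ValueError("requires a >= 1, b > 1, d >= 0")
    critical = math.log(a, b)  # the exponent log_b(a)
    if math.isclose(d, critical, abs_tol=1e-9):
        return 2, f"Theta(n^{d:g} log n)"   # f(n) matches n^(log_b a)
    if d < critical:
        return 1, f"Theta(n^{critical:.2f})"  # recursion dominates
    return 3, f"Theta(n^{d:g})"               # f(n) dominates
```

For instance, `master_theorem(2, 2, 1)` lands in Case 2 and `master_theorem(7, 2, 2)` lands in Case 1.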

🧪 Master Theorem Examples

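Example 1: Merge Sort

$$T(n) = 2T(n/2) + n$$

Here: a = 2, b = 2, f(n) = n

Compute:

$$n^{\log_b a} = n^{\log_2 2} = n$$

Since f(n) = n grows at the same rate, this is Case 2:

$$T(n) = \Theta(n \log n)$$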

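Example 2: Binary Search

$$T(n) = T(n/2) + 1$$

Here: a = 1, b = 2, f(n) = 1

Compute:

$$n^{\log_b a} = n^{\log_2 1} = n^0 = 1$$

Since f(n) = 1 grows at the same rate, this is Case 2:

$$T(n) = \Theta(\log n)$$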

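Example 3: Strassen's Algorithm

$$T(n) = 7T(n/2) + n^2$$

Here: a = 7, b = 2, f(n) = n²

Compute:

$$n^{\log_b a} = n^{\log_2 7} \approx n^{2.81}$$

Since n² is polynomially smaller than $n^{2.81}$, Case 1 applies:

$$T(n) = \Theta\left(n^{\log_2 7}\right) = O\left(n^{2.81}\right)$$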
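A bound like this can be sanity-checked numerically. The sketch below evaluates the Strassen recurrence T(n) = 7T(n/2) + n² directly and divides by n^(log₂ 7); if the bound is tight, the ratio should level off at a constant. The base case T(1) = 1 and the restriction to powers of two are assumptions, and both function names are hypothetical.

```python
import math


def strassen_cost(n):
    """Evaluate T(n) = 7*T(n/2) + n**2 with T(1) = 1, for n a power of two."""
    if n == 1:
        return 1
    return 7 * strassen_cost(n // 2) + n * n


def ratio_to_bound(n):
    """Return T(n) / n**log2(7); a roughly constant ratio supports the bound."""
    return strassen_cost(n) / n ** math.log2(7)
```

With this base case the ratio climbs toward 7/3 as n grows, so T(n) stays within a constant factor of n^(log₂ 7).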

🔄 Iteration Method (Another Useful Technique)

You repeatedly substitute until reaching the base case.

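Example

$$T(n) = T(n/2) + 1$$

Expand:

$$T(n) = T(n/4) + 2 = T(n/8) + 3$$

Continue. After k steps:

$$T(n) = T(n/2^k) + k$$

Stop when:

$$n/2^k = 1 \implies k = \log_2 n$$

Final:

$$T(n) = T(1) + \log_2 n = O(\log n)$$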
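The repeated substitution can be mimicked with a loop. The sketch below unrolls the halving recurrence T(n) = T(n/2) + 1, chosen here as an illustrative assumption, and counts how many substitutions are needed to reach a base case of size 1. `unroll_halving` is a hypothetical helper and assumes n is a power of two.

```python
def unroll_halving(n):
    """Unroll T(n) = T(n/2) + 1 and return the number of expansion steps k.

    Assumes n is a power of two and that the base case is a problem of size 1.
    """
    k = 0
    while n > 1:
        n //= 2  # one substitution: T(n) becomes T(n/2) + 1
        k += 1
    return k
```

The returned k equals log₂ n, which is where the logarithm in the final answer comes from.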

📊 Common Recurrence Patterns

| Recurrence | Time Complexity |
|---|---|
| T(n) = T(n−1) + 1 | O(n) |
| T(n) = T(n−1) + n | O(n²) |
| T(n) = 2T(n/2) + n | O(n log n) |
| T(n) = 2T(n/2) + n² | O(n²) |
| T(n) = T(n/2) + 1 | O(log n) |

Memorize these common patterns — they appear frequently in interviews and competitive programming.
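These patterns can also be checked by evaluating each recurrence directly. The sketch below is illustrative: the base cases (T(0) = 0 for the subtract-by-one recurrences, T(1) = 1 for the halving ones), the power-of-two input, and the helper names `subtract_one` and `halve` are all assumptions.

```python
def subtract_one(n, work):
    """Evaluate T(n) = T(n-1) + work(n) iteratively, with T(0) = 0."""
    total = 0
    for size in range(1, n + 1):
        total += work(size)
    return total


def halve(n, a, work):
    """Evaluate T(n) = a*T(n/2) + work(n) with T(1) = 1, n a power of two."""
    if n == 1:
        return 1
    return a * halve(n // 2, a, work) + work(n)


n = 1024
table = {
    "T(n-1) + 1":    subtract_one(n, lambda m: 1),   # n
    "T(n-1) + n":    subtract_one(n, lambda m: m),   # n(n+1)/2
    "2T(n/2) + n":   halve(n, 2, lambda m: m),       # n*log2(n) + n
    "2T(n/2) + n^2": halve(n, 2, lambda m: m * m),   # 2n^2 - n
    "T(n/2) + 1":    halve(n, 1, lambda m: 1),       # log2(n) + 1
}
```

Under these base cases the exact values have the closed forms noted in the comments, each of which matches the complexity class in the table.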

⚠️ When Master Theorem Does NOT Apply

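The Master Theorem only works when the recurrence has the form:

$$T(n) = a\,T(n/b) + f(n), \quad \text{with constants } a \ge 1,\ b > 1$$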

It does NOT apply if:

- Subproblem sizes are unequal

- The number of subproblems a or the division factor b is not a constant

- The problem shrinks by subtraction rather than division, as in T(n − 1)

Example:

$$T(n) = T(n-1) + T(n-2)$$

This Fibonacci-style recurrence requires the characteristic equation method, not the Master Theorem.
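As a sketch of how the characteristic equation method handles the Fibonacci-style recurrence T(n) = T(n−1) + T(n−2): try a solution of the form rⁿ and solve for r. The constants A and B below are fixed by the base cases, which are assumed here to be positive.

```latex
\begin{align*}
T(n) &= T(n-1) + T(n-2) \\
r^{n} &= r^{n-1} + r^{n-2} \;\Longrightarrow\; r^{2} = r + 1
      && \text{(substitute } T(n) = r^{n}\text{)} \\
r &= \frac{1 \pm \sqrt{5}}{2} \\
T(n) &= A\left(\frac{1+\sqrt{5}}{2}\right)^{n} + B\left(\frac{1-\sqrt{5}}{2}\right)^{n}
      = \Theta(\varphi^{n}), \qquad \varphi = \frac{1+\sqrt{5}}{2} \approx 1.618
\end{align*}
```

The running time is therefore exponential in n, which no case of the Master Theorem can produce.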

🌐 Real-World Importance

Recurrence solving is used in:

- Algorithm analysis — determining time complexity of recursive solutions

- Compiler optimization — understanding recursive code transformations

- Parallel computing — analyzing divide-and-conquer parallelism

- Machine learning algorithm analysis — recursive model training

- Systems performance modeling — predicting scalability

Without solving recurrences, we cannot understand the scalability of recursive algorithms.

📝 Final Summary

Recurrence relations describe recursive algorithm performance. We solve them using:

- Substitution Method — guess and prove by induction

- Recursion Tree Method — visualize as a tree and sum levels

- Master Theorem — direct formula for divide-and-conquer

- Iteration Method — expand until base case

The key skill is recognizing the pattern and choosing the correct method.

Mastering recurrence relations is essential for:

- Competitive programming

- Technical interviews

- Algorithm research

- Advanced computer science