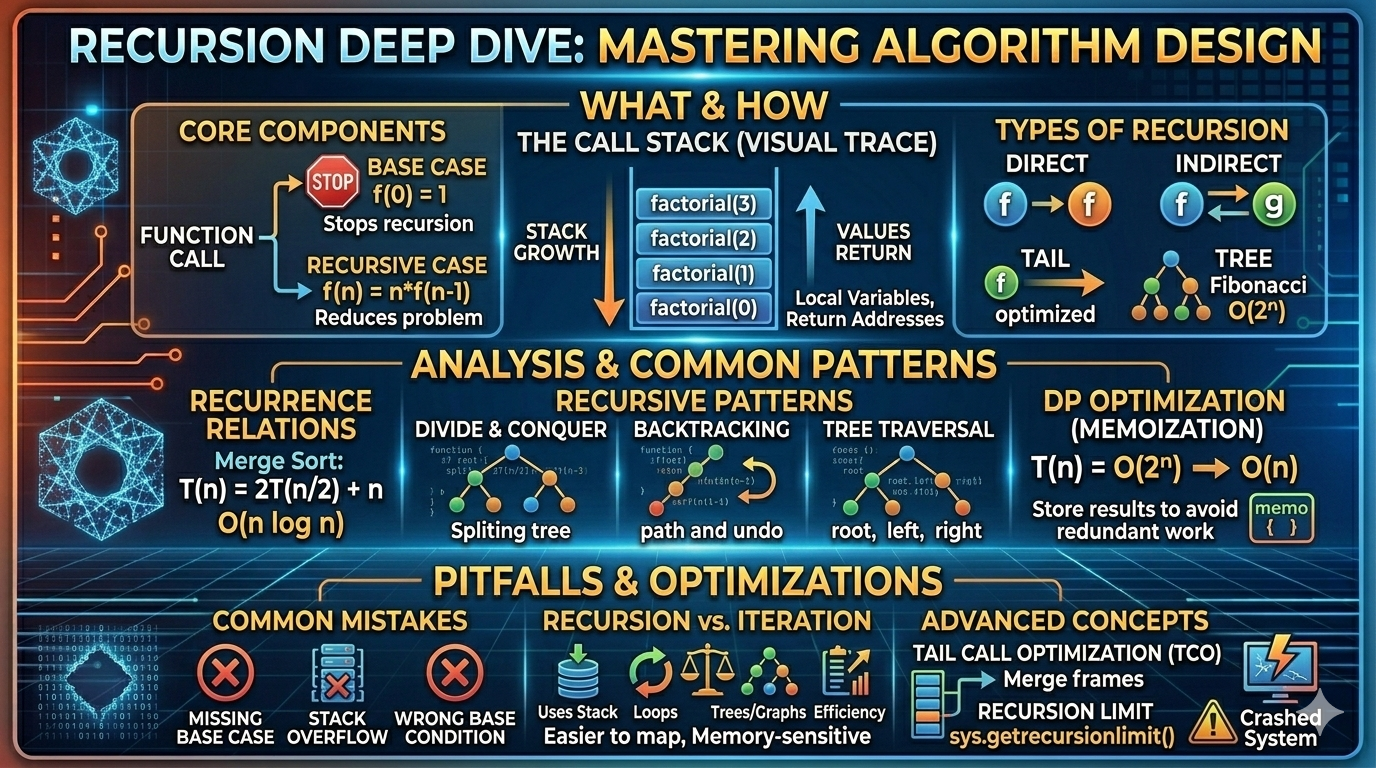

Recursion Deep Dive

From base cases to call stacks — a complete guide to recursive thinking, complexity analysis, and the patterns that power modern algorithms.

Recursion is one of the most elegant and powerful ideas in computer science. At its core, recursion means a function solving a problem by calling itself on smaller inputs.

From tree traversals and divide-and-conquer algorithms to dynamic programming and backtracking, recursion appears everywhere in algorithm design. In this deep dive, we'll explore what recursion really is, how the call stack works, the major types of recursion, complexity analysis, common patterns, pitfalls, and optimizations.

What Is Recursion?

A recursive function has two essential components:

- Base Case — stops the recursion

- Recursive Case — reduces the problem toward the base case

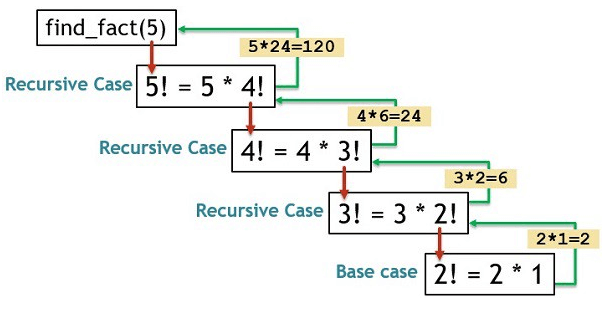

Basic Example: Factorial

The mathematical definition:

Base case: 0! = 1

def factorial(n):

if n == 0:

return 1

return n * factorial(n-1)Each call reduces the problem size by 1 until reaching 0, at which point the recursion unwinds and results are combined.

How the Call Stack Works

When a recursive function is called:

- A new stack frame is created

- Local variables are stored in that frame

- Execution pauses until the recursive call returns

Tracing factorial(3)

factorial(3)

→ 3 * factorial(2)

→ 3 * (2 * factorial(1))

→ 3 * (2 * (1 * factorial(0)))

→ 3 * (2 * (1 * 1))

→ 6Each call waits for the next one to finish. The stack grows on each call and shrinks as calls return.

💡 Key Insight

Recursion uses implicit stack memory. Every active call occupies a frame on the call stack, which directly affects space complexity.

Types of Recursion

🔹 1. Direct Recursion

A function calls itself directly.

def countdown(n):

if n == 0:

return

countdown(n-1)🔹 2. Indirect Recursion

Two or more functions call each other, forming a cycle.

def A(n):

if n <= 0: return

B(n-1)

def B(n):

if n <= 0: return

A(n-1)🔹 3. Tail Recursion

The recursive call is the last operation in the function — nothing happens after it returns. This allows compilers to optimize away stack frames.

def factorial(n, acc=1):

if n == 0:

return acc

return factorial(n-1, n*acc)Tail recursion can be optimized into iteration (Tail Call Optimization) in languages that support it, eliminating stack growth entirely.

🔹 4. Tree Recursion

A function makes multiple recursive calls per invocation, branching like a tree.

def fib(n):

if n <= 1:

return n

return fib(n-1) + fib(n-2)Recurrence relation:

Time complexity: O(2ⁿ) — exponential without memoization.

Recursion vs Iteration

| Recursion | Iteration |

|---|---|

| Uses call stack | Uses loops |

| Cleaner for trees & graphs | Memory efficient |

| Easier mathematical mapping | Often faster in practice |

| Risk of stack overflow | No recursion depth limit |

Iterative Factorial

def factorial(n):

result = 1

for i in range(1, n+1):

result *= i

return resultAnalyzing Recursive Time Complexity

Recursive algorithms are analyzed using recurrence relations. The recurrence describes the algorithm's work in terms of smaller subproblems.

Linear Recursion

def print_numbers(n):

if n == 0:

return

print_numbers(n-1)

print(n)Recurrence:

Solution: O(n) — one unit of work per level, n levels deep.

Divide and Conquer — Merge Sort

Solution (Master Theorem Case 2): O(n log n)

📊 Common Recurrences and Their Solutions

T(n) = T(n−1) + 1 → O(n) |

T(n) = T(n/2) + 1 → O(log n) |

T(n) = 2T(n/2) + n → O(n log n)

Space Complexity of Recursion

The space complexity of a recursive function is determined by the maximum depth of the call stack — how many frames are alive at the same time.

Linear Depth

def f(n):

if n == 0:

return

f(n-1)Depth = n, space complexity: O(n)

Logarithmic Depth

Binary search halves the problem each call:

Tree recursion like fib(n) has depth O(n) (the deepest branch) even though total nodes are exponential. Space complexity tracks depth, not total calls.

Common Recursive Patterns

🔹 1. Divide and Conquer

Split the problem into independent subproblems, solve recursively, combine results.

- Merge Sort — split, sort halves, merge

- Quick Sort — partition around pivot, recurse

- Binary Search — eliminate half the space each step

🔹 2. Backtracking

Explore all possibilities and undo choices when they lead to dead ends.

- N-Queens

- Sudoku Solver

- Generating all permutations

def backtrack(state):

if goal(state):

record_solution()

return

for choice in choices:

make_choice()

backtrack(new_state)

undo_choice() # key: revert state🔹 3. Tree Traversal

Process every node in a tree structure recursively.

def preorder(root):

if root is None:

return

print(root.val) # process current node

preorder(root.left) # recurse left

preorder(root.right) # recurse right🔹 4. Memoized Recursion (Top-Down DP)

Cache subproblem results to avoid redundant computation.

memo = {}

def fib(n):

if n in memo:

return memo[n]

if n <= 1:

return n

memo[n] = fib(n-1) + fib(n-2)

return memo[n]Time complexity improves from O(2ⁿ) to O(n) — each subproblem is solved exactly once.

Mathematical View of Recursion

Recursion is the computational counterpart of mathematical induction. Both share the same structure:

- Base case — verify the property holds at the start

- Inductive step — show that if it holds for smaller input, it holds for current input

If your function handles the base case correctly and correctly assumes the recursive call works on smaller inputs, it works for all valid inputs. This is the formal basis for proving recursive algorithms correct.

Common Recursion Mistakes

- ❌ Missing base case — recursion never stops

- ❌ Wrong base condition — returns incorrect value, corrupting all results above it

- ❌ Stack overflow — depth exceeds system limit

- ❌ Recomputing values — exponential time without memoization

- ❌ Infinite recursion — recursive case doesn't actually reduce the problem

⚠ Always verify two things before finalizing a recursive solution:

1. Does the base case return the correct value? 2. Does each recursive call move closer to the base case?

When NOT to Use Recursion

Recursion is not always the right tool. Prefer iteration when:

- Recursion depth is extremely large (millions of calls)

- Memory is constrained — each frame consumes stack space

- The problem is naturally iterative — simple linear scans

- Performance is critical — function call overhead accumulates

Example: processing a linked list of 10 million nodes recursively risks stack overflow. An iterative loop handles it in constant space.

Recursion in Real Systems

Recursion powers real-world software across many domains:

- Compilers — parsing and evaluating syntax trees

- Operating systems — process trees, signal propagation

- File system traversal — walking directory trees

- AI search algorithms — minimax, alpha-beta pruning

- Graphics — fractals, ray tracing, space partitioning

- Database query engines — recursive CTEs, query plan optimization

Advanced Concepts

🔹 Tail Call Optimization (TCO)

Languages like Scheme, Elixir, and Scala reuse the current stack frame for tail calls instead of creating a new one. This allows infinite tail-recursive loops without stack overflow. Python and Java do not implement TCO.

🔹 Mutual Recursion

Functions depend on each other — A calls B, B calls A. Common in parsers and state machines. Requires careful base cases across all participating functions.

🔹 Structural Recursion

The recursive structure mirrors the data structure being processed. Common in functional programming languages like Haskell.

🔹 Generative Recursion

The subproblem size isn't simply n−1 or n/2 — it's generated dynamically. Examples include fractals, quicksort (partition size varies), and certain graph algorithms.

Recursion Depth and Stack Overflow

Every system enforces a recursion limit. In Python, the default is 1000 frames:

import sys

print(sys.getrecursionlimit()) # default: 1000

sys.setrecursionlimit(10000) # increase with caution⚠ Raising the recursion limit is a temporary workaround

Deep recursion can crash programs with a RecursionError (Python) or StackOverflowError (Java/C++). The real fix is redesigning the algorithm.

Solutions to Stack Overflow

- Convert to iteration — replace the implicit call stack with an explicit stack data structure

- Use tail recursion — in languages with TCO support

- Use trampolining — simulate TCO in languages without it

Final Summary

Recursion is a method of solving problems by breaking them into smaller versions of themselves. It is:

- Closely tied to mathematical induction

- Elegant but memory-sensitive due to stack usage

- Essential for divide-and-conquer and backtracking

- Analyzable through recurrence relations

✅ To master recursion:

✔ Understand the call stack deeply

✔ Always define the base case clearly

✔ Write the recurrence relation before coding

✔ Account for space complexity (stack depth)

✔ Use memoization when subproblems overlap