Logarithms & Series in Algorithm Analysis

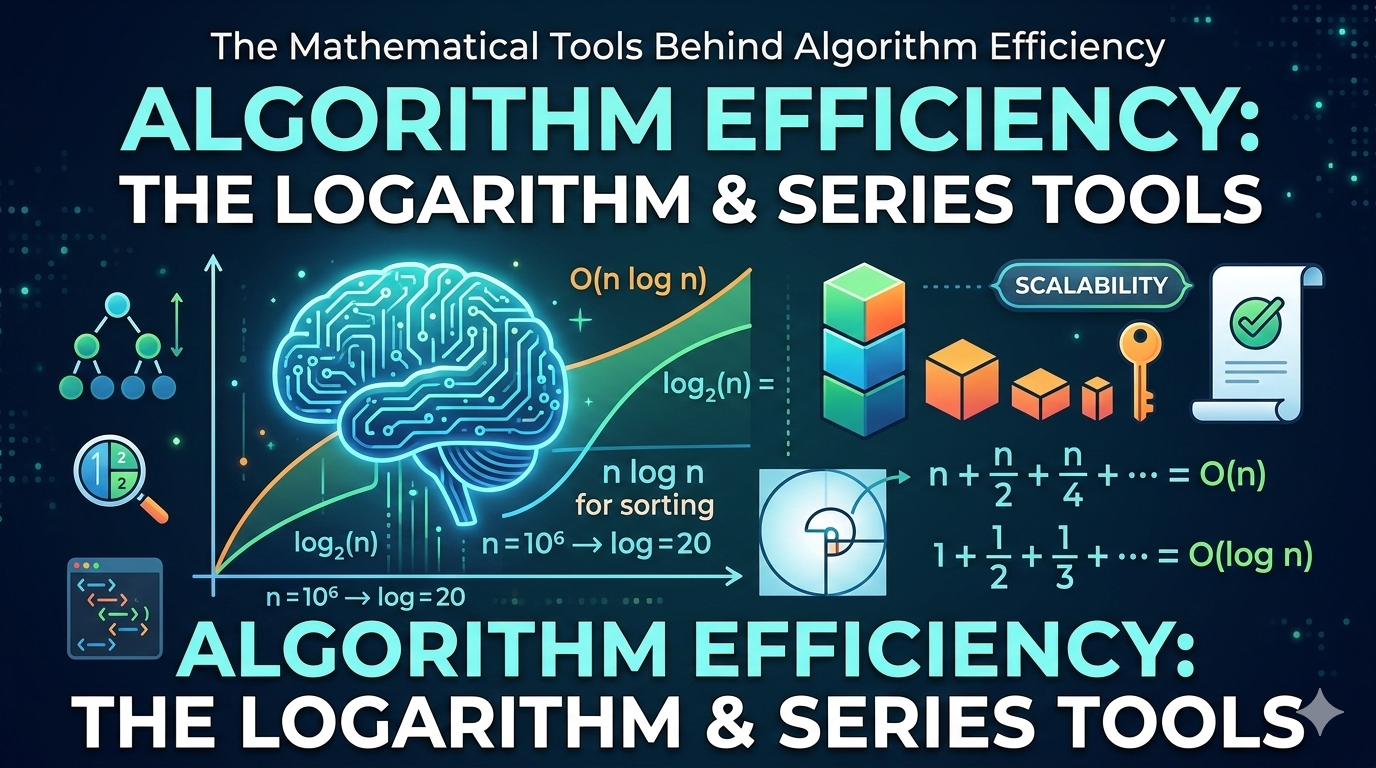

The Mathematical Tools Behind Algorithm Efficiency

When analyzing algorithms, especially their time complexity, two mathematical tools appear again and again: logarithms and series (summations).

They help us:

- Solve recurrence relations

- Analyze loops

- Compare growth rates

- Derive tight asymptotic bounds

If you understand logarithms and series well, algorithm analysis becomes dramatically easier.

Why Logarithms Matter in Algorithms

Logarithms typically appear when:

- The problem size is repeatedly halved

- We use divide and conquer

- Data structures are tree-based

- We use binary search

Logarithm Basics

Most common bases in algorithms:

- Base 2 → binary systems

- Base e → mathematical analysis

- Base 10 → rarely used in algorithm theory

Important Logarithm Rules

Change of base:

In asymptotic analysis:

Base doesn't matter for Big-O.

Why Logarithms Appear in Algorithms

Binary Search Example

Each step halves the array. After k steps:

Solve:

So binary search runs in:

Tree Height

In a balanced binary tree:

Thus operations like search, insert, and delete take:

Comparing Growth Rates Using Logarithms

Growth rate order:

Logarithms grow extremely slowly. Example:

That's tiny compared to 1,000,000 — which is exactly why O(log n) algorithms scale to enormous inputs.

Series (Summations) in Algorithm Analysis

Series appear when:

- Analyzing loops

- Counting operations

- Expanding recurrence trees

- Computing total cost

Arithmetic Series

Form:

Formula:

Asymptotic growth: Θ(n²)

Example: Nested Loop

for i in range(n):

for j in range(i):

print("Hello")Total operations:

Geometric Series

Form:

Formula:

Important Case in Algorithms

When r = 1/2:

So: Θ(n)

Example: Recursion Tree for Merge Sort

Each level costs n:

- Level 0 → n

- Level 1 → n

- Level 2 → n

- ⋯

Number of levels: log n. Total cost:

Harmonic Series

Form:

Growth: Θ(log n)

Example: Analysis of QuickSort (Average Case)

Expected comparisons involve:

Logarithmic Series in Algorithms

Example: Doubling Loop

i = 1

while i < n:

i *= 2Values of i: 1, 2, 4, 8, 16, …

Stops when 2k = n, so k = log2 n.

Common Summations to Memorize

1. Constant

2. Linear

3. Squares

4. Geometric

5. Harmonic

Infinite Series & Convergence

Some algorithms produce infinite geometric series. If |r| < 1:

This is used in amortized analysis and probabilistic analysis to show that an infinite sum still converges to a constant or linear bound.

Using Integrals to Approximate Series

For large n:

Example:

So the harmonic series grows like log n — confirmed by integral approximation.

Putting It All Together

When analyzing an algorithm, follow these steps:

Step 1: Identify Pattern

- Is it halving? → Logarithm

- Is it accumulating? → Series

- Is it recursive? → Recurrence + series

Step 2: Convert to Mathematical Form

Write the cost as a summation.

Step 3: Apply Known Formula

Use arithmetic, geometric, or harmonic rules to close the sum and get an asymptotic bound.

Real Algorithm Examples

| Algorithm | Mathematical Tool Used |

|---|---|

| Binary Search | Logarithms |

| Merge Sort | Geometric + Logarithm |

| QuickSort (average) | Harmonic series |

| Heap operations | Logarithms |

| Dynamic array resizing | Geometric series |

Why This Matters in Real Systems

Understanding logarithms and series helps you:

- Predict scalability

- Avoid quadratic explosions

- Design efficient distributed systems

- Analyze database indexing

- Optimize machine learning pipelines

A system that runs in O(log n) instead of O(n) scales to billions of users. A system that runs in O(n²) instead of O(n log n) may be unusable at scale.

Final Summary

Logarithms appear when problems shrink multiplicatively. Series appear when costs accumulate.

| Pattern | Result |

|---|---|

| Halving each step | log n |

| Nested linear loops | n² |

| Divide & conquer | n log n |

| Harmonic sums | log n |

| Geometric sums | Linear (Θ(n)) |

Mastering logarithms and series transforms algorithm analysis from guesswork into mathematics.