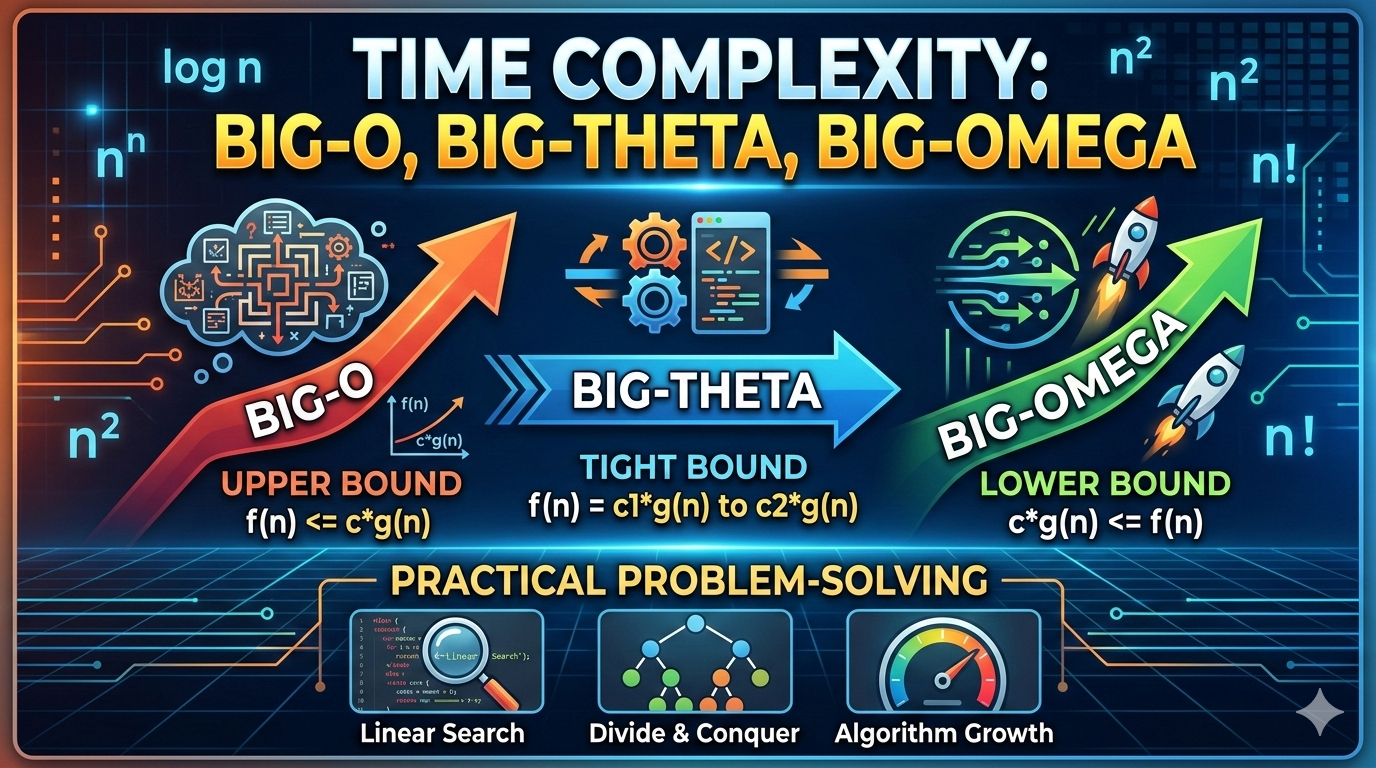

Time Complexity: Big-O, Big-Theta, and Big-Omega

Understanding Algorithm Growth with Practical Problem-Solving Examples

Introduction

When analyzing algorithms, we don't just ask:

"Does it work?"

We ask:

"How does it perform as input grows?"

Time complexity measures how an algorithm's running time increases relative to input size n.

The three fundamental notations used to describe this growth are:

- Big-O (O) → Upper bound

- Big-Theta (Θ) → Tight bound

- Big-Omega (Ω) → Lower bound

Understanding these helps you predict performance before writing production code.

Why We Ignore Constants

If an algorithm runs in:

5n + 20

As n becomes very large, constants and smaller terms become insignificant.

So we simplify:

5n + 20 → O(n)

We focus only on the dominant term.

Big-O Notation (Upper Bound)

Definition

Big-O describes the worst-case growth rate of an algorithm.

It answers:

What is the maximum time this algorithm could take?

Example: Linear Search

def linear_search(arr, target):

for i in range(len(arr)):

if arr[i] == target:

return i

return -1Step-by-step Analysis:

- Loop runs up to

ntimes - Each comparison is constant time

O(1) - Total operations ≈

n

Therefore: O(n)

Worst case: element is at the end or not present.

Example: Nested Loop

for i in range(n):

for j in range(n):

print(i, j)- Outer loop →

n - Inner loop →

n

Total operations: n × n = n²

Time Complexity: O(n²)

Big-Theta Notation (Tight Bound)

Definition

Big-Theta describes the exact growth rate when upper and lower bounds are the same.

It answers:

What is the actual growth behavior?

Example

for i in range(n):

print(i)

This always runs n times.

- Best case =

n - Worst case =

n

So we say: Θ(n)

Because it is exactly linear.

Big-Omega Notation (Lower Bound)

Definition

Big-Omega describes the best-case growth rate.

It answers:

What is the minimum time this algorithm will take?

Example: Linear Search (Best Case)

If the element is at index 0:

- Only 1 comparison.

Ω(1)

But worst case remains: O(n)

Best case → Ω(1)

Worst case → O(n)

Combined Example

Consider this function:

def example(arr):

print(arr[0])

for i in range(len(arr)):

print(arr[i])Step-by-step:

- First print →

O(1) - Loop →

O(n)

Total: O(1 + n)

Drop constant: O(n)

Since it always runs n times: Θ(n)

How to Solve Time Complexity Problems

Here is a structured method:

Step 1: Count Loops

Each loop usually represents O(n) unless modified.

for i in range(n):

→ O(n)

Step 2: Check Nested Loops

for i in range(n):

for j in range(n):

→ O(n²)

Step 3: Check Dividing Loops (Logarithmic)

while n > 1:

n = n // 2

Each step halves n.

Number of times you can divide by 2: log₂(n)

So: O(log n)

Step 4: Combine Independent Parts

for i in range(n):

print(i)

for j in range(n):

print(j)

O(n) + O(n) = O(2n)

Drop constant: O(n)

Practice Problems with Solutions

Problem 1

for i in range(n):

for j in range(i):

print(i, j)

Inner loop runs i times.

Total operations:

1 + 2 + 3 + ... + (n - 1)

This sum equals: n(n - 1) / 2

Which simplifies to: O(n²)

Problem 2

i = 1

while i < n:

i = i * 2

Sequence: 1 → 2 → 4 → 8 → 16 → ...

Number of steps: log₂(n)

So: O(log n)

Problem 3

for i in range(n):

print(i)

for j in range(n*n):

print(j)- First loop →

O(n) - Second loop →

O(n²)

Total: O(n + n²)

Dominant term: O(n²)

Growth Rate Comparison

From fastest to slowest:

O(1) < O(log n) < O(n) < O(n log n) < O(n²) < O(2ⁿ) < O(n!)

As n increases, higher complexity grows dramatically faster.

Real-World Impact

If n = 1,000,000:

O(n)→ 1,000,000 operationsO(log n)→ ~20 operationsO(n²)→ 1,000,000,000,000 operations

This is why choosing the right algorithm matters.

Key Takeaways

- Big-O → Worst case

- Big-Theta → Exact bound

- Big-Omega → Best case

- Drop constants

- Focus on dominant term

- Nested loops multiply

- Dividing loops give

log n

Time complexity analysis helps you predict scalability before systems fail.